Modern NLP systems don’t fail because of models.

They fail because teams underestimate process rigor.

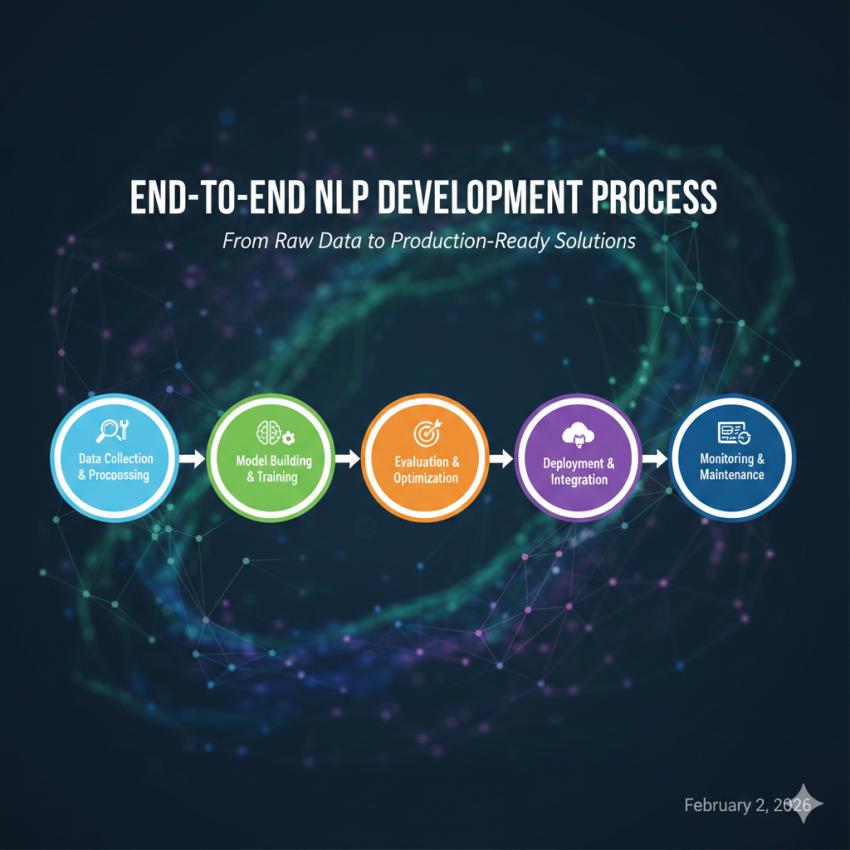

An end-to-end NLP development workflow is the difference between a demo that works once and a production system that scales, adapts, and delivers ROI. This guide breaks down how professional teams build NLP systems from raw data to live deployment and where most companies get it wrong.

What Is the NLP Development Process?

The NLP development process is a structured pipeline that transforms unstructured language data into deployable, monitored, and continuously improving AI systems.

At a production level, it includes:

Data strategy and acquisition

Data cleaning and annotation

Model selection and training

Evaluation and bias control

Deployment architecture

Monitoring, retraining, and optimization

This is the backbone of enterprise-grade NLP Development services, not just model fine-tuning.

Stage 1: Data Strategy (Where NLP Projects Are Won or Lost)

What experts do differently

Most teams start with “What model should we use?”

Experienced NLP teams start with data intent mapping.

Key decisions:

What language phenomena matter? (intent, sentiment, entities, syntax)

What failure is acceptable vs unacceptable?

What data reflects real user behavior?

Common mistake: Using generic datasets for domain-specific problems (legal, healthcare, fintech).

High-performing NLP systems are trained on behavioral data, not theoretical language samples.

Stage 2: Data Collection & Annotation

Raw data sources

Customer support logs

Product reviews

Search queries

Transcripts (calls, meetings)

Documents (PDFs, contracts, policies)

Annotation is not labeling it’s modeling reality

Annotation quality defines model ceiling.

Professional NLP teams:

Use multi-annotator consensus

Track inter-annotator agreement

Continuously refine annotation guidelines

Why this matters:

LLMs amplify annotation errors. They don’t correct them.

Stage 3: Text Preprocessing & Feature Engineering

Even with transformer models, preprocessing is not optional.

Key steps include:

Language normalization (case, punctuation, emojis)

Tokenization strategy selection

Stop-word handling (task-specific)

Domain term preservation

Advanced teams also:

Inject metadata (timestamps, user roles)

Preserve formatting signals for documents

Handle multilingual code-switching explicitly

Stage 4: Model Selection & Training

The real question isn’t “Which model?”

It’s “Which trade-offs matter?”

ConstraintModel Choice Impact

Latency: Smaller or distilled models

AccuracyFine-tuned transformers

ExplainabilityHybrid rule + ML

CostOpen-source vs API LLMs

Training approaches:

Classical ML (for constrained tasks)

Fine-tuned transformers

Retrieval-augmented generation (RAG)

Hybrid symbolic + neural systems

This is where experienced NLP Development services create differentiation not by chasing hype, but by engineering fit.

Stage 5: Evaluation, Bias & Robustness Testing

Accuracy is table stakes

Production NLP requires stress testing of language.

Evaluation goes beyond F1 score:

Edge-case handling

Adversarial prompts

Demographic bias analysis

Domain drift simulation

Expert insight:

If your NLP model hasn’t failed loudly in testing, it will fail quietly in production.

Stage 6: Deployment & Integration

NLP deployment is a systems problem

Not a data science problem.

Deployment considerations:

API vs embedded models

Real-time vs batch inference

Autoscaling and cost controls

Security and PII handling

Most enterprise NLP systems deploy as:

Microservices

Event-driven pipelines

Embedded inference layers

This is where many internal teams lean on external NLP Development services to avoid architectural debt.

Stage 7: Monitoring, Retraining & Continuous Improvement

Language changes. Users adapt. Models decay.

Production NLP requires:

Input drift detection

Output confidence monitoring

Human-in-the-loop correction

Scheduled or trigger-based retraining

Without monitoring, NLP accuracy always declines.

The only question is how fast.

What Separates Enterprise NLP from Experiments

Hobby NLP

One-off training

Static datasets

No monitoring

Model-centric thinking

Production NLP

Continuous data pipelines

Annotation feedback loops

Cost-aware inference

Business-aligned evaluation

This is why serious organizations invest in structured NLP Development services instead of isolated model builds.

Final Takeaway:

End-to-end NLP development is not about building smarter models; it’s about building systems that understand language reliably at scale.